Welcome to my article "Sustainable cloud: a sustainable approach to resource usage", which also appeared on the Deloitte blog.

For some time now, the fashionable term "sustainable" has been creeping into various areas of our lives. Broadly speaking, it is an approach that focuses on aspects such as the environment, capital, and economics.

Sustainable cloud

How much do you pay for electricity in your server room? How heavily do you use your infrastructure, and is that level optimal? How much does the work of maintaining it cost you? What does your IT team do day to day, and how much of that work moves your business forward?

You probably don't know the answers to some of these questions, and it may never have occurred to you to think about them.

In the early days, when the first "earthlings" tried to estimate how much using the cloud would cost, they left some of these costs out as well. Why bother? After all, the cloud provider pays for them (or at least for some of them).

Unlike you, however, the cloud provider counts all of these items very carefully: electricity, floor space, maintenance, and so on. Why? So that in the end those costs are included in your bill for cloud services.

And this is where the disappointment set in: the cloud can be expensive. It will be expensive if you try to carry your existing habits over to the cloud.

You pay for what you use

That was the first big surprise: every month you suddenly have to pay for everything that has been launched, whether or not anyone is using it.

So where is the sustainable usage in that?

It appears only once you start paying closer attention to how many resources you actually need and how many you currently have running. For example, if your developers don't work 24/7 (and I assume they don't), why not switch those resources off when nobody is using them?

If you need it, take it and use it; if you don't, give it back. It is that simple and obvious.

The takeaway: "turn off the lights at the end of the day wherever you are not." Use only as much as you need at a given moment. That is good for the environment and for your finances.
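To make the "turn off the lights" idea a bit more concrete, here is a minimal sketch of the decision such a schedule boils down to. The working hours and days are my assumptions for illustration, and the function names are hypothetical; actually stopping and starting resources would go through your provider's scheduler or API, which is not shown here.

```python
from datetime import datetime

# Hypothetical working window for a development environment (assumption).
WORK_START_HOUR = 8          # inclusive
WORK_END_HOUR = 18           # exclusive
WORK_DAYS = {0, 1, 2, 3, 4}  # Monday=0 ... Friday=4


def should_be_running(now: datetime) -> bool:
    """Return True if dev resources should be up at the given local time."""
    return now.weekday() in WORK_DAYS and WORK_START_HOUR <= now.hour < WORK_END_HOUR


def weekly_running_hours() -> int:
    """Hours per week the environment stays on under this schedule."""
    return len(WORK_DAYS) * (WORK_END_HOUR - WORK_START_HOUR)


def weekly_savings_ratio() -> float:
    """Fraction of always-on hours (24 * 7 = 168) avoided by the schedule."""
    return 1 - weekly_running_hours() / (24 * 7)
```

With these assumed hours the environment runs 50 of the 168 hours in a week, so it is switched off for roughly 70% of the time it would otherwise be billed for.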

All the more so because the cloud offers mechanisms that help you manage resources more wisely, such as auto-scaling, cost monitoring, and automation.

In addition, when you migrate or build a solution in the cloud, there are a few places where it is worth stopping to think a little more deeply.

Sustainable cloud architecture

When you have the pleasure of designing the architecture of a new solution, that is the best moment to think again about sustainable use of the cloud.

The choice of services for specific needs, and the way you use them, also affects how much capacity you need and how much you will end up paying for it.

Sometimes there are several options and variants, as anyone who has checked how many ways there are to run containers in the cloud knows. The goal, however, is to arrive at an architecture that delivers the expected result with a sufficient, rather than excessive, level of resources and cost.

For example, just look at how many ways (storage classes) there are to keep data in Amazon S3. Putting 100 TB of rarely accessed data into the standard class is certainly not the best choice when you consider, for instance, the cost.
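A rough back-of-the-envelope comparison shows why the storage class matters. The sketch below is only an illustration: the per-GB prices are placeholder values I assumed for the example, not a current price list, and it ignores request, retrieval, and minimum-storage-duration fees, which can change the picture for colder classes.

```python
# Illustrative per-GB monthly prices in USD. These numbers are placeholders
# (assumptions), not a current price list; check the provider's pricing page.
PRICE_PER_GB_MONTH = {
    "STANDARD": 0.023,
    "STANDARD_IA": 0.0125,
    "DEEP_ARCHIVE": 0.001,
}

GB_PER_TB = 1024


def monthly_storage_cost(size_tb: float, storage_class: str) -> float:
    """Storage-only cost; ignores request, retrieval and minimum-duration fees."""
    return size_tb * GB_PER_TB * PRICE_PER_GB_MONTH[storage_class]


if __name__ == "__main__":
    # Compare the same 100 TB across the assumed classes.
    for name in PRICE_PER_GB_MONTH:
        print(f"{name:>14}: ${monthly_storage_cost(100, name):,.2f} per month")
```

Even with made-up numbers the shape of the result is the point: the same 100 TB costs a fraction of the standard-class price once it sits in a class meant for rarely accessed data.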

I think application architecture design is where the largest number of our decisions will affect:

- The number and type of resources we will need.

- How much we will have to pay for all of it.

- What impact it will have on the environment (more resources mean more electricity, heat, CO2, and so on).

All of this of course requires a good knowledge of the cloud platform, its services, and the options they offer. With that knowledge you can prepare an architecture and choose a service configuration that achieves the intended result while taking care of the economic (and, of course, environmental) factors.

Sustainable cloud operation

Another aspect worth considering is day-to-day work in the cloud: the tasks that have to be done both to keep applications alive and to provision new resources.

Here the application architecture and the cloud services it is built from matter a great deal. Depending on the type of service (IaaS, PaaS, and so on), you will be dealing with different things and tasks every day.

For example, building a database cluster on virtual machines will not be the best option once you look at how much work it takes to maintain. If you choose a managed database service instead (for example Amazon RDS), a large part of the configuration and maintenance work is on the cloud provider's side.

The second important point is that by using managed services we hand over to the provider the responsibility for managing infrastructure utilization. For example, when running containerized applications on AWS Fargate, we don't have to worry much about the performance and utilization of the underlying infrastructure. This is another advantage from a sustainability standpoint: because these services run on shared resources, the provider takes care of using the physical infrastructure as efficiently as possible.

So why waste time, energy, and money doing something the provider will surely do better? In the meantime you can take care of other things that translate directly into business value.

Another aspect is infrastructure configuration. With the arrival of the cloud, automation and configuration management in the Infrastructure as Code approach have developed considerably.

Proper preparation, well-defined deployment processes, and automation of repetitive tasks save a huge amount of time and sometimes money (not to mention the security benefits). In addition, you can develop optimal configuration patterns that meet specific sustainability goals.

Remember, too, to review your resources and their utilization on a regular basis. Experience shows that there is almost always something that can be optimized. If you are entering the world of the cloud, this is definitely something to build into your resource management process.
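Such a periodic review can start very simply. The sketch below assumes you have already exported CPU utilization samples from your monitoring tool; the `ResourceUsage` type, the resource names, and the 10% threshold are hypothetical and only serve the illustration.

```python
from dataclasses import dataclass
from statistics import mean


@dataclass
class ResourceUsage:
    name: str
    cpu_percent_samples: list[float]  # e.g. hourly averages exported from monitoring


def find_underutilized(resources: list[ResourceUsage], threshold: float = 10.0) -> list[str]:
    """Return names of resources whose average CPU stays below the threshold.

    Resources without any samples are skipped rather than flagged.
    """
    return [
        r.name
        for r in resources
        if r.cpu_percent_samples and mean(r.cpu_percent_samples) < threshold
    ]
```

Anything this flags is only a candidate for a closer look (downsizing, scheduling, or removal), not an automatic verdict; average CPU alone says nothing about memory, I/O, or short peaks.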

Sustainable application

This brings us to one more very important aspect: the application we are building, which will run on our cloud infrastructure and architecture. For all of this to make sense, the application we build must, in short, also be aware of where it will run and which cloud mechanisms it will use. The term "Cloud Native Application" can help here. It describes the principles that should guide the development of an application meant to take advantage of what the cloud offers.

For example, if our architecture uses automatic scaling for some components, the application cannot be indifferent to that, because scaling may cause various problems in how it runs. A lack of this awareness may force us to change our architectural assumptions, which can in turn lead to reduced flexibility and higher costs.

The second matter is, of course, the application code. How many times have you seen pilgrimages to the infrastructure team with the request "add some CPU and RAM, because the application is running a bit slow…"? We can of course take the same approach in the cloud. The application may then run better, but at the end of the month it will show up on our cloud bill. It also leads to excessive use of infrastructure, electricity, and everything else we should keep in mind when taking a sustainable approach.
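As a small, generic illustration of fixing the code instead of adding hardware, compare two ways of finding the elements shared by two lists. This is a textbook example I am adding for illustration, with hypothetical function names, not something taken from a particular project.

```python
def find_common_slow(a: list[int], b: list[int]) -> list[int]:
    """Quadratic: every 'x in b' scans the whole list b."""
    return [x for x in a if x in b]


def find_common_fast(a: list[int], b: list[int]) -> list[int]:
    """Linear on average: membership in a set is a hash lookup."""
    b_set = set(b)
    return [x for x in a if x in b_set]
```

Both functions return the same result, but on large inputs the first one burns far more CPU time, which is exactly the kind of problem that tends to get "solved" by asking for a bigger machine.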

There are, of course, many more things worth taking care of: the data architecture, how we will access the data, what format it will be stored in, and how we will build applications. Will they be microservices running in containers, or will we opt for a "function as a service" approach? There are plenty of places where we can look for better and more sustainable use of cloud resources.

Cloud providers offer various services that help, for example, to optimize code or detect potential defects that could translate into increased demand for resources. In AWS, for example, there is Amazon CodeGuru, which among other things analyzes code to find pieces that are suboptimal and consume excessive resources (and, ultimately, money).

Why is it worth doing?

To wrap up, I would like to draw attention to something that, in my view, has accompanied the cloud practically from the very beginning: using the cloud optimally and wisely to meet the needs and goals set before us.

If we look at cloud providers and their approach, sustainable use of resources has been with them from the start, along with the possibilities that technology offers in combination with nature and our skills.

Every major provider invests heavily in renewable energy, and a large part of their physical infrastructure is already powered this way.

Because in most cases they design and build the components of that infrastructure themselves (servers and other equipment), they have a lot of freedom to look for the most efficient use of resources (energy, cooling, space, and so on). At their scale of business, improving something by a few percentage points adds up to enormous numbers overall.

So, since they are trying so hard, it is worth doing what we can to use resources wisely too. The providers, of course, try to help us with this by sharing their knowledge and experience.

AWS recently extended its Well-Architected Framework (a set of good practices for working with the cloud) with another pillar dedicated to Sustainability.

The point is to show how our various decisions and our approach to using cloud infrastructure can affect not only the environmental aspect but also costs. Much of it revolves more or less around what I have mentioned here.

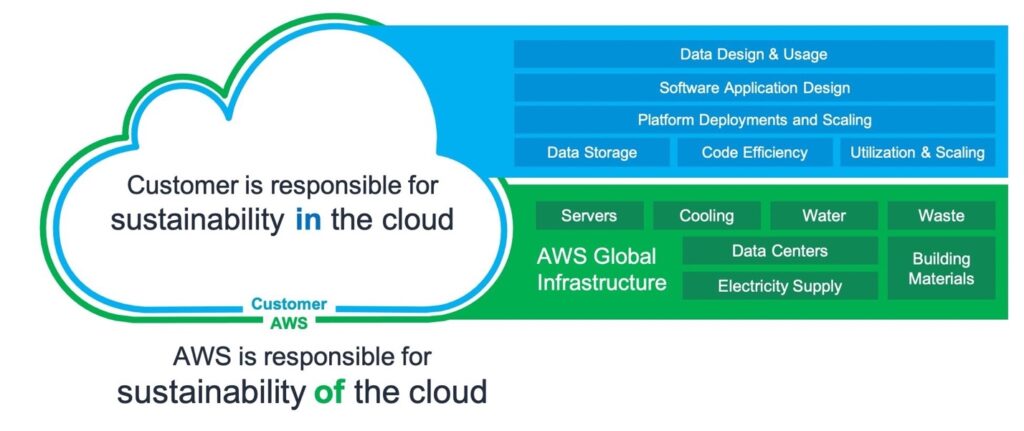

Just as with security, there is a "Shared Responsibility Model" here, showing where, from a sustainability standpoint, the provider is responsible and has influence, and where we do.

So, if you are working right now on the architecture, configuration, or code of some solution, look around. Think not only about the costs your solution will generate or about the business benefits. Also consider the footprint your solution will leave on the environment and on the world you will leave to future generations.

Cheers!

THIS ARTICLE ORIGINALLY APPEARED ON THE DELOITTE BLOG

"Sustainable cloud: a sustainable approach to resource usage"